After running a WCF-based SOA in production for a year or so, we have been working on our next major version, and we recently added some new services.

In the load test, we encountered the following error:

System.Net.Sockets.SocketException: Only one usage of each socket address (protocol/network address/port) is normally permitted

After some investigation (see Durgaprasad Gorti's WebLog), we understood that when we put a heavy load of requests on our middle-tier application (actually an ASP.NET web service), it sends many requests to other services in our distributed system. We used TCPView to see what was going on with the ports, and saw that each request opened a new client port, which was not reused until the OS released it 4 minutes later.

We changed the TCPTimedWaitDelay value in the registry to 30 seconds, and that solved the problem.

We decided, however, that this is not a real solution, just a workaround. The real problem is that ports are not reused, and we need to solve it, as we don't know when we will hit the wall next time.

Our code used a client proxy generated by Svcutil.exe, and we created a new client each time a request was needed:

System.ServiceModel.ClientBase<T> clientProxy = Activator.CreateInstance(proxyType, new object[] { endpoint.Binding, endpoint.Address }) as System.ServiceModel.ClientBase<T>;

Now, one of our developers said that in .NET 3.5 (actually .NET 3.0 SP1) Microsoft added some kind of caching that should have solved this issue.

As we were running in a .NET 3.5 SP1 environment, this clearly hadn't solved the problem, so we went looking for the reason. It didn't take long to find Wenlong Dong's Blog post, “Performance Improvement for WCF Client Proxy Creation in .NET 3.5 and Best Practices”.

It was very clear from his post that we failed to use the built-in caching because we used a constructor that takes a Binding as a parameter. Actually, even if we hadn't used a constructor that disables the caching, it would still have been disabled, because we also accessed the ChannelFactory property:

clientProxy.ChannelFactory.Endpoint.Behaviors.Add(epb);

We wanted to fix this by calling a different constructor and adding the behavior to our configuration, but we couldn't: our configuration isn't based on a config file but is read from a central DB, and there is no caching-friendly constructor that doesn't read its configuration from config files (there should be such a constructor in 4.0; see this new constructor).

You can work around this in various ways, like this one by Pablo M. Cibraro.

You can also read in great detail how the client actually works in What WCF client does.

Anyway, we decided to go in a different direction.

If you read Microsoft’s Middle-Tier Client Applications, you’ll see two options:

- Cache the WCF client object and reuse it for subsequent calls where possible.

- Create a ChannelFactory object and then use that object to create new WCF client channel objects for each call.

Clearly, the first option… is not an option for us, as it talks about subsequent calls.

We need a robust multithreaded middle tier.

So we were left with only one valid solution: to use ChannelFactory instead of the client proxy, and to cache it (actually redoing the caching mechanism Microsoft added for ClientBase).

We can succeed at caching where the framework fails because we allow ourselves to make some assumptions about the reuse of the same ChannelFactory.

We use the cached factory (if one doesn't exist, we create it) to return a typed channel by calling its CreateChannel(). This opens a new socket for each request, unless a previously used socket has been closed and is available for reuse. (You should of course close each created channel by calling its Close() method.)

This is what we wanted.
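For illustration only, here is a minimal sketch of what such a cache could look like. It is not our production code: the names ChannelFactoryCache, GetFactory, Call and IEchoService are hypothetical, and it assumes that endpoint is a ServiceEndpoint and that a single factory per contract type and address can safely be shared by all requests.

```csharp
using System;
using System.Collections.Generic;
using System.ServiceModel;
using System.ServiceModel.Description;

// Hypothetical contract, used only to make the sketch concrete.
[ServiceContract]
public interface IEchoService
{
    [OperationContract]
    string Echo(string text);
}

// Hypothetical helper: keeps one ChannelFactory<T> per contract type and address.
public static class ChannelFactoryCache
{
    private static readonly object Sync = new object();
    private static readonly Dictionary<string, ChannelFactory> Factories =
        new Dictionary<string, ChannelFactory>();

    // Returns the cached factory, or creates, configures and opens a new one.
    // Assumption: behaviors are fixed per endpoint, so they are applied only
    // when the factory is first created.
    public static ChannelFactory<T> GetFactory<T>(
        ServiceEndpoint endpoint, params IEndpointBehavior[] behaviors)
    {
        string key = typeof(T).FullName + "|" + endpoint.Address.Uri;
        lock (Sync)
        {
            ChannelFactory cached;
            if (Factories.TryGetValue(key, out cached) &&
                cached.State != CommunicationState.Faulted)
            {
                return (ChannelFactory<T>)cached;
            }

            var factory = new ChannelFactory<T>(endpoint.Binding, endpoint.Address);
            foreach (IEndpointBehavior behavior in behaviors)
            {
                factory.Endpoint.Behaviors.Add(behavior);
            }
            factory.Open();
            Factories[key] = factory; // replaces a faulted entry, if any
            return factory;
        }
    }

    // Creates a channel for a single call and always releases it afterwards.
    public static TResult Call<T, TResult>(ChannelFactory<T> factory, Func<T, TResult> call)
    {
        T channel = factory.CreateChannel();
        var communicationObject = (ICommunicationObject)channel;
        try
        {
            TResult result = call(channel);
            communicationObject.Close(); // graceful close
            return result;
        }
        catch
        {
            communicationObject.Abort(); // Close() is not safe on a faulted channel
            throw;
        }
    }
}
```

A call site would then look something like ChannelFactoryCache.Call(ChannelFactoryCache.GetFactory<IEchoService>(endpoint, epb), c => c.Echo("hi")), where epb is the endpoint behavior we used to add through clientProxy.ChannelFactory.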

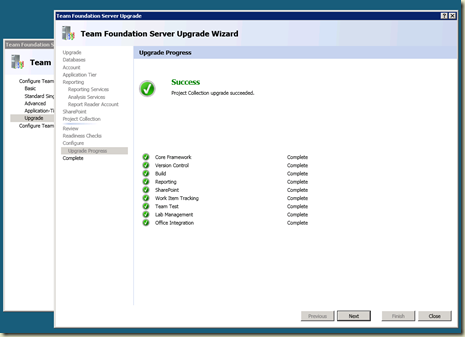

The load test finished successfully.

Thanks Daniel, Michael & Eyal.